Photogrammetry Reconstruction

Photogrammetry software

The {stack-exchange} post describes a free workflow, which {this post} confirms. Among the tools mentioned there, Meshroom and {3DF Zephyr Free} seem the most promising.

Meshroom photogrammetry reconstruction

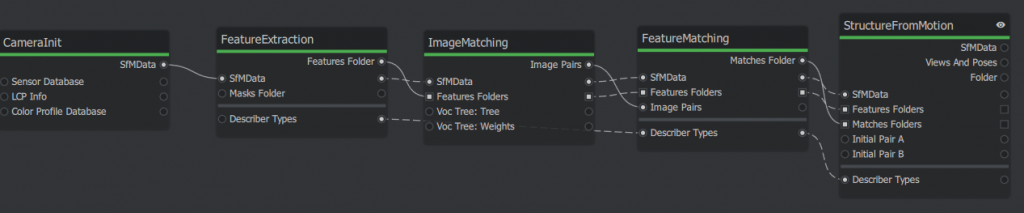

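Meshroom saves its graphical node pipeline as a JSON file, so I used Gemini (the basic free Fast version) to write a minimal reconstruction pipeline that produces a point cloud, i.e., structure from motion (SfM). The node chain is CameraInit → FeatureExtraction → ImageMatching → FeatureMatching → StructureFromMotion.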

The corresponding script is attached below. Copy it, save it as a .mg file, and open it as a project in the Meshroom GUI. On the first run, Meshroom may prompt you to upgrade the nodes; just accept. The file contains many dynamic placeholders, e.g., {cache}/{nodeType}/{uid0}, which Meshroom fills in automatically with hashed values.
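To make the placeholder idea concrete, here is a small illustration of how a template such as {cache}/{nodeType}/{uid0} turns into a real folder path. This is only a sketch of the substitution, not Meshroom's own code; the function name `expand_placeholders` and the cache folder name passed in are assumptions for the example.

```python
def expand_placeholders(template, cache, node_type, uid0):
    """Substitute the three placeholders used in the .mg file.

    Hypothetical helper for illustration only: Meshroom performs this
    expansion internally when it computes a node.
    """
    return (
        template.replace("{cache}", cache)
        .replace("{nodeType}", node_type)
        .replace("{uid0}", uid0)
    )


if __name__ == "__main__":
    path = expand_placeholders(
        "{cache}/{nodeType}/{uid0}/sfm.abc",
        cache="MeshroomCache",
        node_type="StructureFromMotion",
        uid0="1d7db1354dab04f156d46106b0b6e27aa0570be3",
    )
    print(path)
```

Plain string replacement is used instead of `str.format` so that any other braces in the template are left untouched.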

A useful trick is to clear the pending status (under the “⋮” menu in the graph editor header) and remove all images before passing the file to Gemini, because the image information takes up many lines.
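The image-removal half of this trick can also be scripted. The sketch below assumes the per-image data lives in the `viewpoints` and `intrinsics` lists of each CameraInit node, as in the listing in this post; the function names are my own, and it does not clear the pending status, which still has to be done in the GUI.

```python
import json
from pathlib import Path


def strip_images(project):
    """Empty the image lists of every CameraInit node, in place.

    Returns the number of entries removed. Assumes the key names
    'viewpoints' and 'intrinsics' seen in the .mg listing.
    """
    removed = 0
    for node in project.get("graph", {}).values():
        if node.get("nodeType") != "CameraInit":
            continue
        inputs = node.setdefault("inputs", {})
        for key in ("viewpoints", "intrinsics"):
            removed += len(inputs.get(key, []))
            inputs[key] = []
    return removed


def strip_images_file(src, dst):
    """Read a .mg file, strip its image entries, and write a slim copy."""
    project = json.loads(Path(src).read_text(encoding="utf-8"))
    removed = strip_images(project)
    Path(dst).write_text(json.dumps(project, indent=4), encoding="utf-8")
    return removed
```

Writing to a separate destination file keeps the original project, with its images, intact.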

Source code

{
    "header": {
        "pipelineVersion": "2.2",
        "releaseVersion": "2023.3.0",
        "fileVersion": "1.1",
        "template": false,
        "nodesVersions": {
            "CameraInit": "9.0",
            "StructureFromMotion": "3.3",
            "FeatureExtraction": "1.3",
            "ImageMatching": "2.0",
            "FeatureMatching": "2.0"
        }
    },
    "graph": {
        "CameraInit_1": {
            "nodeType": "CameraInit",
            "position": [0, 0],
            "parallelization": { "blockSize": 0, "size": 0, "split": 1 },
            "uids": { "0": "961e54591174ec5a2457c66da8eadc0cb03d89ba" },
            "internalFolder": "{cache}/{nodeType}/{uid0}/",
            "inputs": {
                "viewpoints": [],
                "intrinsics": [],
                "sensorDatabase": "${ALICEVISION_SENSOR_DB}",
                "defaultFieldOfView": 45.0,
                "viewIdMethod": "metadata"
            },
            "outputs": {
                "output": "{cache}/{nodeType}/{uid0}/cameraInit.sfm"
            }
        },
        "FeatureExtraction_1": {
            "nodeType": "FeatureExtraction",
            "position": [200, 0],
            "parallelization": { "blockSize": 40, "size": 0, "split": 0 },
            "uids": { "0": "7e0174439ccb598ec8c32e23cf2a1da4cd6ab00c" },
            "internalFolder": "{cache}/{nodeType}/{uid0}/",
            "inputs": {
                "input": "{CameraInit_1.output}",
                "describerTypes": ["dspsift"],
                "describerPreset": "normal",
                "describerQuality": "low",
                "forceCpuExtraction": true
            },
            "outputs": {
                "output": "{cache}/{nodeType}/{uid0}/"
            }
        },
        "ImageMatching_1": {
            "nodeType": "ImageMatching",
            "position": [400, 0],
            "parallelization": { "blockSize": 0, "size": 0, "split": 1 },
            "uids": { "0": "896a53c0e4aaf5e02ec421857f1f30439cfe3950" },
            "internalFolder": "{cache}/{nodeType}/{uid0}/",
            "inputs": {
                "input": "{CameraInit_1.output}",
                "featuresFolders": ["{FeatureExtraction_1.output}"],
                "method": "VocabularyTree",
                "tree": "${ALICEVISION_VOCTREE}"
            },
            "outputs": {
                "output": "{cache}/{nodeType}/{uid0}/imageMatches.txt"
            }
        },
        "FeatureMatching_1": {
            "nodeType": "FeatureMatching",
            "position": [600, 0],
            "parallelization": { "blockSize": 20, "size": 0, "split": 0 },
            "uids": { "0": "b446f57fb541abb70e8dc68c47a37e87ec84beff" },
            "internalFolder": "{cache}/{nodeType}/{uid0}/",
            "inputs": {
                "input": "{ImageMatching_1.input}",
                "featuresFolders": "{ImageMatching_1.featuresFolders}",
                "imagePairsList": "{ImageMatching_1.output}",
                "describerTypes": "{FeatureExtraction_1.describerTypes}"
            },
            "outputs": {
                "output": "{cache}/{nodeType}/{uid0}/"
            }
        },
        "StructureFromMotion_1": {
            "nodeType": "StructureFromMotion",
            "position": [800, 0],
            "parallelization": { "blockSize": 0, "size": 0, "split": 1 },
            "uids": { "0": "1d7db1354dab04f156d46106b0b6e27aa0570be3" },
            "internalFolder": "{cache}/{nodeType}/{uid0}/",
            "inputs": {
                "input": "{FeatureMatching_1.input}",
                "featuresFolders": "{FeatureMatching_1.featuresFolders}",
                "matchesFolders": ["{FeatureMatching_1.output}"],
                "describerTypes": "{FeatureMatching_1.describerTypes}",
                "computeStructureColor": true
            },
            "outputs": {
                "output": "{cache}/{nodeType}/{uid0}/sfm.abc"
            }
        }
    }
}

Click Start at the top of the panel. The nodes change color to show their status: Green – Done; Yellow – Running; Blue – Queued; Red – Error.
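Since the .mg file above was written by a language model, it can be worth checking the node wiring before opening it. The sketch below scans every node's inputs for references of the form {NodeName.attribute} and reports any that point to a node or attribute missing from the file. The function names are my own, and the attribute check only sees attributes written explicitly in the file, so a project that omits default-valued attributes may trigger false warnings.

```python
import json
import re

# Matches references such as "{CameraInit_1.output}". Placeholders like
# "{cache}" or "${ALICEVISION_VOCTREE}" contain no dot and are ignored.
REF_PATTERN = re.compile(r"\{(\w+)\.(\w+)\}")


def find_references(value):
    """Yield (node_name, attribute) pairs found anywhere inside value."""
    if isinstance(value, str):
        yield from REF_PATTERN.findall(value)
    elif isinstance(value, list):
        for item in value:
            yield from find_references(item)
    elif isinstance(value, dict):
        for item in value.values():
            yield from find_references(item)


def check_graph(project):
    """Return a list of problems found in a parsed .mg project dict."""
    graph = project.get("graph", {})
    problems = []
    for name, node in graph.items():
        for ref_node, ref_attr in find_references(node.get("inputs", {})):
            target = graph.get(ref_node)
            if target is None:
                problems.append(f"{name}: unknown node '{ref_node}'")
                continue
            known = set(target.get("inputs", {})) | set(target.get("outputs", {}))
            if ref_attr not in known:
                problems.append(f"{name}: '{ref_node}' has no attribute '{ref_attr}'")
    return problems


def check_file(path):
    """Load a .mg file from disk and run the reference check on it."""
    with open(path, encoding="utf-8") as fh:
        return check_graph(json.load(fh))
```

An empty list from `check_file` means every reference resolves to something present in the file; it does not guarantee that Meshroom accepts the parameter values.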

After the SfM node is done, find the reconstructed point cloud in {project-file-dir}/MeshroomCache/StructureFromMotion/{latest-UID}/sfm.abc
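Because each run gets its own hashed folder, finding the latest one by hand can be tedious. A minimal sketch for locating the newest result follows; it assumes the folder layout given above and treats the most recently modified sfm.abc as the latest. The function name `latest_sfm_output` is my own.

```python
from pathlib import Path


def latest_sfm_output(project_dir, filename="sfm.abc"):
    """Return the most recently modified SfM output file, or None.

    Looks in <project_dir>/MeshroomCache/StructureFromMotion/<uid>/.
    """
    node_dir = Path(project_dir) / "MeshroomCache" / "StructureFromMotion"
    candidates = list(node_dir.glob(f"*/{filename}"))
    if not candidates:
        return None
    return max(candidates, key=lambda p: p.stat().st_mtime)


if __name__ == "__main__":
    # Replace "." with the folder that contains your .mg project file.
    print(latest_sfm_output("."))
```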

The .abc (Alembic) format is efficient for visual effects (VFX) work but hard to visualize directly, so convert it to a .ply file using the built-in converter.

# Set ALICEVISION_ROOT for the current PowerShell session if it is not set yet
$env:ALICEVISION_ROOT = "D:/AYX/Softwares/Meshroom/aliceVision"
# Convert .abc to .ply (run this from the folder that contains the executable)
.\aliceVision_exportColoredPointCloud.exe --input "{your_path}\sfm.abc" --output "{your_path}\{file_name}.ply"

I use the Python library PyVista to visualize the point cloud; the point colors are stored under the ‘RGB’ entry.

Python Source Code

import pyvista as pv
import numpy as np

# Embed the interactive scene as HTML when running in Jupyter
pv.set_jupyter_backend('html')

# Load the PLY you exported
cloud = pv.read(r"{your_file}.ply")

# CRITICAL: re-center the data at (0, 0, 0) so you can see it,
# by subtracting the average position of all points
center = np.mean(cloud.points, axis=0)
cloud.points = cloud.points - center
print(f"Data centered by subtracting: {center}")

# Use this to find the color key
print(f'color stored in [{cloud.array_names}]')

# Visualize with PyVista
pl = pv.Plotter()
# scalars="RGB" with rgb=True uses the stored point colors, so the trees and pit look real
pl.add_mesh(cloud, scalars="RGB", rgb=True, point_size=3.0, render_points_as_spheres=True)
pl.add_axes()
pl.show_grid()
# Export before show(), because show() may close the plotter
pl.export_html(r'{your_output_path}\preview.html')
pl.show()

Example output is shown below, with an orthophoto for reference. The cameras are also shown, colored green. Left-click and drag to orbit, Shift + left mouse button to pan, Ctrl + left mouse button to spin the view, and scroll to zoom.
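If the colors do not show up, it can help to check which properties the exported .ply actually carries without opening a viewer. The sketch below reads only the PLY header using the standard library; the function name is my own, and the property names it prints depend on what the converter wrote, which I have not verified here.

```python
def read_ply_header(path):
    """Parse a PLY header.

    Returns (format, elements), where elements maps each element name
    to a tuple (count, [property names]). Works for ASCII and binary
    PLY files because the header itself is always plain text.
    """
    fmt = None
    elements = {}
    current = None
    with open(path, "rb") as fh:
        if fh.readline().strip() != b"ply":
            raise ValueError("not a PLY file")
        for raw in fh:
            tokens = raw.decode("ascii", errors="replace").split()
            if not tokens:
                continue
            if tokens[0] == "end_header":
                break
            if tokens[0] == "format":
                fmt = tokens[1]
            elif tokens[0] == "element":
                current = tokens[1]
                elements[current] = (int(tokens[2]), [])
            elif tokens[0] == "property" and current is not None:
                # The property name is always the last token on the line.
                elements[current][1].append(tokens[-1])
    return fmt, elements
```

Calling `read_ply_header` on the exported file lists the per-vertex properties, which shows whether color channels were written at all.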